Artificial Intelligence (AI) is an integral part of our present-day technology landscape. At Sourcetoad, we’ve been assisting our clients for years in leveraging this powerful tool. However, it’s become more important than ever to have an internal policy on how these tools can be utilized by your team.

Here’s a quick summary of ours—we hope it is helpful and informative.

Our Policies

Recognizing the need for responsible AI usage, we adhere to guiding principles that ensure predictable, testable, and traceable use of AI in our operations.

1. Responsibility for AI-generated work:

Any output from an AI system we present as our own work remains our responsibility.

- Even though our AI-based systems may generate work before human review, all staff members are obligated to thoroughly check the AI-produced results.

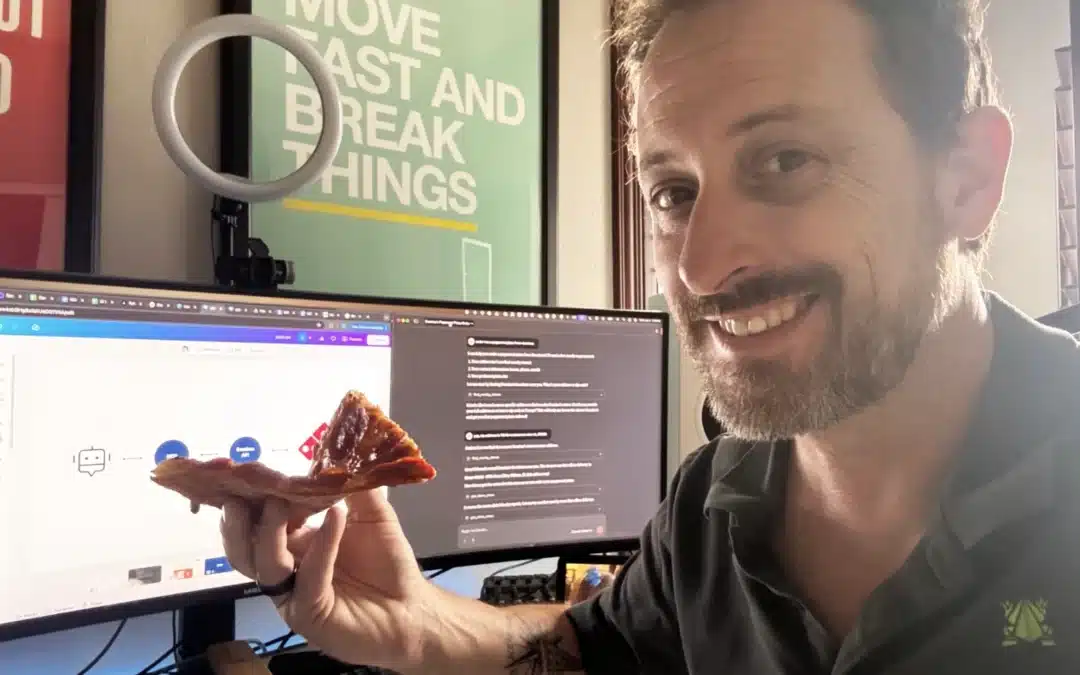

- This applies across the board, from drafting code to creating more whimsical content, like the AI-generated images of toads writing code that we use in internal presentations.

- As ChatGPT itself warns, it may produce inaccurate information, which underlines the importance of human oversight.

2. Maintaining Privacy:

We safeguard all sensitive and private information.

- We never send sensitive data to unvetted AI systems, regardless of their privacy policies. This includes personally identifiable information, proprietary code, and datasets that our clients would not want competitors to access.

- Furthermore, all interactions with AI systems are conducted over encrypted (SSL/TLS) connections to uphold data security.

- We recommend data classification as a strategy for deciding which parts of a system can be sent to a third party, and checking each classification against the privacy policy of the platform in use.
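As a rough illustration of the data classification idea, the sketch below labels data by sensitivity and checks that label against the highest level a given platform has been vetted to receive. The sensitivity levels, platform names, and the `may_send` helper are all hypothetical, chosen for illustration only; a real policy would define its own labels and vetting results.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2  # e.g. proprietary code
    RESTRICTED = 3    # e.g. personally identifiable information


# Hypothetical vetting results: the highest sensitivity each platform may receive,
# decided after reviewing that platform's privacy policy.
PLATFORM_CEILING = {
    "vetted-assistant": Sensitivity.INTERNAL,
    "public-chatbot": Sensitivity.PUBLIC,
}


def may_send(label: Sensitivity, platform: str) -> bool:
    """Return True only if the platform is vetted for data at this sensitivity."""
    ceiling = PLATFORM_CEILING.get(platform)
    if ceiling is None:
        # Unvetted platforms receive nothing, regardless of their privacy policy.
        return False
    return label <= ceiling
```

Defaulting to "deny" for any platform missing from the table mirrors the rule above that sensitive data never goes to unvetted systems.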

3. Respecting Intellectual Property:

We pay attention to licensing requirements.

- It is critical to remember that AI-generated output cannot simply be incorporated into a system without due review.

- Internal management and clients are actively involved in this review process to ensure all intellectual property rights are respected.

4. Addressing AI Biases:

AI is not exempt from biases, and it’s crucial to address these.

- Even if AI is used merely as a source of inspiration, its influence may carry inherent biases that should not be perpetuated if they contradict the values of our teams.

5. Prioritizing Testing:

We focus on rigorous testing, both manual and, wherever possible, automated.

- For example, we advocate the use of diverse datasets to avoid unintentional biases in the models.

- In addition, we have run across several instances where AI produced false information, known as hallucinations. Having a testing process in place to catch these is paramount.

Sourcetoad recognizes the immense potential AI offers while keeping its focus on the Traceability, Predictability, and Testability of any AI or ML usage.
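One narrow kind of hallucination that lends itself to automated testing is AI-generated code that imports a package that does not exist. The sketch below is one possible check, not a description of Sourcetoad's actual process: it parses a generated Python snippet and reports any top-level module that cannot be found in the current environment. The function name `unresolved_imports` is made up for this example.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level modules imported by `source` that are not installed here."""
    tree = ast.parse(source)
    modules = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            # "import a.b" -> check the top-level package "a"
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # "from a.b import c" -> check "a"; relative imports are skipped
            modules.add(node.module.split(".")[0])
    # find_spec returns None when a top-level module cannot be located
    return sorted(m for m in modules if importlib.util.find_spec(m) is None)
```

A check like this only flags missing modules in the environment where it runs; it complements, rather than replaces, human review of what the generated code actually does.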

Sourcetoad’s Internal Usage of AI Explained

We use AI internally to drive team efficiency.

ChatGPT

ChatGPT assists us in drafting content like emails and proposals, while adhering to stringent data confidentiality standards.

GitHub Copilot

GitHub Copilot is another tool we utilize to enhance productivity, supporting our engineers with tasks such as code completion, code consistency, and refactoring. Think of this like a “code autocomplete.”

Zoom and Google Meet

Currently, we use Zoom and Google Meet with their Closed Captions functionality, but we refrain from using external voice transcription and summarization services to ensure data privacy and confidentiality.

We are living in a very exciting time for AI, with more AI-enabled tools becoming available almost every day. As more businesses embrace these technologies, we encourage you to consider adopting your own internal guidelines to protect your clients as well as your employees. We hope Sourcetoad’s policy will help guide you as you shape policies for your own organization.